While working on a book about how we think, I’ve spent a lot of time studying how our thinking tends to fail. One pattern shows up again and again: we flatten complex issues into two camps.

Spencer Greenberg’s team at Clearer Thinking lays it out cleanly in a 2023 essay about binary thinking: we collapse statements into true or false, things into good or bad, people into them or us. We pick a side, and we settle in.

This mindset ignores the nuances of the world and runs against systems thinking. As I explore in my upcoming book, systems thinking aims to align our mental models with reality. Sometimes reality really does have two sides, and a binary view is appropriate. But more often than not, the world has more grey in it than we like to admit.

Right now, one of the loudest dichotomies in public discourse is about AI. On one end is a camp that calls it a force multiplier — the greatest technology ever invented, and one we’d be foolish not to embrace. On the other end is a camp claiming that any use of it hollows out what makes us human. As is often the case in binaries, both poles are loud. And both are reductive. I sit in the middle, convinced of AI’s upside but also concerned about its cost and the second-order consequences for society.

Today’s essay is a look at how I’m experimenting with AI — using it to amplify my productivity and thinking, while keeping enough guardrails that it doesn’t start thinking for me.

Over the last 18 months, I’ve written a series on this:

In that final piece, I acknowledged that the AI layer wasn’t quite ready.

Notion AI promises to bring everything together, but the model implementation isn’t quite there yet. I’ve found that it’s great when working with the text on a single page. But when I try to prompt it to bring multiple pages together or find pages that fit certain characteristics, its execution is inconsistent.

Since that last essay, the AI layer has improved dramatically. And it’s far more useful with organized infrastructure and purposeful workflows.

While this space continues to evolve, the one constant has been the knowledge itself: the notes and insights that make up my second brain. The idea of taking notes hasn’t changed much since Leonardo da Vinci filled his notebooks in Renaissance Italy.

What has always made distillation and synthesis hard is the effort required to dig through those notes and generate true insight. The effort is the friction. Some friction is good — it causes us to do the hard work required to learn. But too much stops us in our tracks.

That excess friction is the gap between knowing and acting.

Friction is the Enemy of Compounding

I’ve been tinkering with a second brain system since 2022. It holds years of ideas, decisions, and plans. Hundreds of book annotations. Project archives. Meeting notes. Half-finished essays. As it has grown, finding things in it has become a slog. I rarely revisit notes older than a few months. Not because those notes aren’t valuable, but because digging back through them takes too long.

This isn’t a new problem. Or a me problem, for that matter. As I wrote in my commonplace book essay, this struggle has played out across centuries. Whether it’s in the form of a physical notebook or a digital one, the friction of returning to old notes is real. The tools change, but the problem of finding old material and making any valuable use of it persists.

With enough effort, of course, true synthesis is possible. But often the cost of searching is more than the expected payoff if and when we find what we’re looking for. Opening old notebooks, rereading marginalia, and chasing half-remembered quotes across years of notes is real work. And most of the time, we come up empty — or with a marginal insight that wasn’t worth the effort.

Yet if we’re not going to return to our old notes, what’s the point in having them? A second brain only compounds if you return to it. And you’ll only return if the friction is low enough that surfacing old knowledge doesn’t burn an afternoon.

This is the paradox we have to solve. Most personal knowledge management (PKM) advice optimizes the front of the pipeline: capture and organization. Those were once real bottlenecks, and technology has largely solved them. But as any operations professional will tell you, fixing one bottleneck rarely eliminates the problem. It just shifts the choke point further down the pipeline.

Last year, I broke down the PKM pipeline into a framework called CODER (derived from Tiago Forte’s CODE framework). In each of these phases, we can use technology to ease the friction.

In the Capture phase, we use voice memos, mobile capture, and browser clippers to pull information into our systems with minimal friction.

In the Organize phase, we use folder structure, tags, and automation rules to make our knowledge easier to find.

And in the Retrieve phase, modern tools like search and backlinks surface our content when we need it.2

That leaves the two middle phases — Distill and Express.

Reducing friction here is harder, because this is where the real thinking lives. These phases require real time. They’re where we turn raw material into something valuable.

This is the leverage point of the system. It’s pulling threads from different notes and combining them into our own insight.

That’s where AI tools are starting to surface use cases that compound. But before we get into those use cases, it’s important to set some boundaries about how to use AI without outsourcing our agency to it.

Use AI, Don’t Let AI Use You

Last year, I read You Are Not a Gadget, a technology manifesto by Jaron Lanier, a computer scientist, virtual-reality pioneer, and longtime skeptic of what software does to its users. I underlined one sentence, later captured in one of my Zettelkasten sessions, that I’ve been thinking about recently:

“When people are told that a computer is intelligent, they become prone to changing themselves in order to make the computer appear to work better, instead of demanding that the computer be changed to become more useful.”

Take the first half of that sentence: “changing themselves in order to make the computer appear to work better.” Translated, that means outsourcing your thinking. Treating model output as your own conclusion. Letting it draft the first version of your judgment and accepting it without examining it. This is the atrophy I warn about in the introduction of my book and a chief objection of AI skeptics.

This is a valid concern. I’m worried about it too. But as I also note in my book’s introduction, I’m an optimist. So I choose to focus on the second half of this sentence: “demanding that the computer be changed to become more useful.”

To me, that means making sure that AI fits my workflow, not the other way around. The shape of my work was set long before I had software that could help with it. I should conform the tool to that shape and direct it. The agency stays with me.

I can have concerns about the technology while finding ways to use it to amplify my thinking, not replace it.

Refusing to engage with the technology risks getting left behind. As Wharton professor and AI evangelist Ethan Mollick is fond of saying: “Today’s AI is the worst AI you will ever use.” That was true when ChatGPT was first released three and a half years ago, and it is true today.

Step back and look at AI’s exponential growth, and it’s obvious the use cases will keep expanding. Do you have an older relative who struggles to use an iPhone? That’s what’s at risk from boycotting the technology. It’s self-limiting. That’s not Stoic. That’s just stubborn.

It’s okay to use AI while still having concerns about its implications. Once we acknowledge that, the question then becomes: How do we use it while maintaining our own agency?

The infrastructure I’ve developed is my answer to Lanier’s challenge. It’s the dichotomy of control applied to a new tool. AI’s existence isn’t up to me. How I engage with it is.

The Infrastructure of My Second Brain

For the last four years, I’ve used Notion as the hub for my second brain. I use it for task management, meeting notes, project plans, and almost everything else related to storing my digital knowledge.

Then, about a year ago, I started using Obsidian. It began as a place to organize my book research, but eventually became the home for everything I extract from what I read. I liked the ease of working with Markdown files and linking them together through Obsidian’s minimalist interface.

Over the last month, I’ve experimented with an evolution that takes on more of what Notion used to do for me.

What I’ve been testing can be simplified into three layers:

Layer 1: Markdown

A second brain is, at its core, a collection of notes. That was true with my Notion system. It is true with the notecard system that Robert Greene and Ryan Holiday use. And it’s still true with this updated architecture within Obsidian.

In this system, every note is a Markdown file with a .md extension. For the less technically savvy out there, Markdown is not a proprietary format. A Markdown file is plain text that virtually any text editor can open. This means that, unlike notes in a tool like Notion, which live inside that platform until I export them, I’m not locked into any vendor or software. If Obsidian closes up shop tomorrow, I can simply package up my files, which sit in folders on my Mac, and move them to a new tool. I never have to worry about which tool I use to read them. The files are universally readable across devices.

Markdown in practice. Plain text with a few formatting cues — readable on all devices.
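Because each note is plain text, a few lines of code can read an entire vault without Obsidian in the picture. What follows is a minimal sketch, assuming the notes sit under a single folder; the function name `load_notes` and the idea of keying notes by file name are illustrative choices, not part of Obsidian or any other tool mentioned here.

```python
from pathlib import Path


def load_notes(vault_dir: str) -> dict[str, str]:
    """Return {note name: note text} for every .md file under vault_dir.

    Hypothetical helper for illustration: notes are keyed by file name
    without the extension, so two files with the same name in different
    subfolders would overwrite each other.
    """
    notes = {}
    for path in sorted(Path(vault_dir).rglob("*.md")):
        # A Markdown note is ordinary UTF-8 text, so no special parser is needed.
        notes[path.stem] = path.read_text(encoding="utf-8")
    return notes
```

Nothing here depends on a vendor library. If the files move to another tool tomorrow, the same loop still works.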

Layer 2: Obsidian

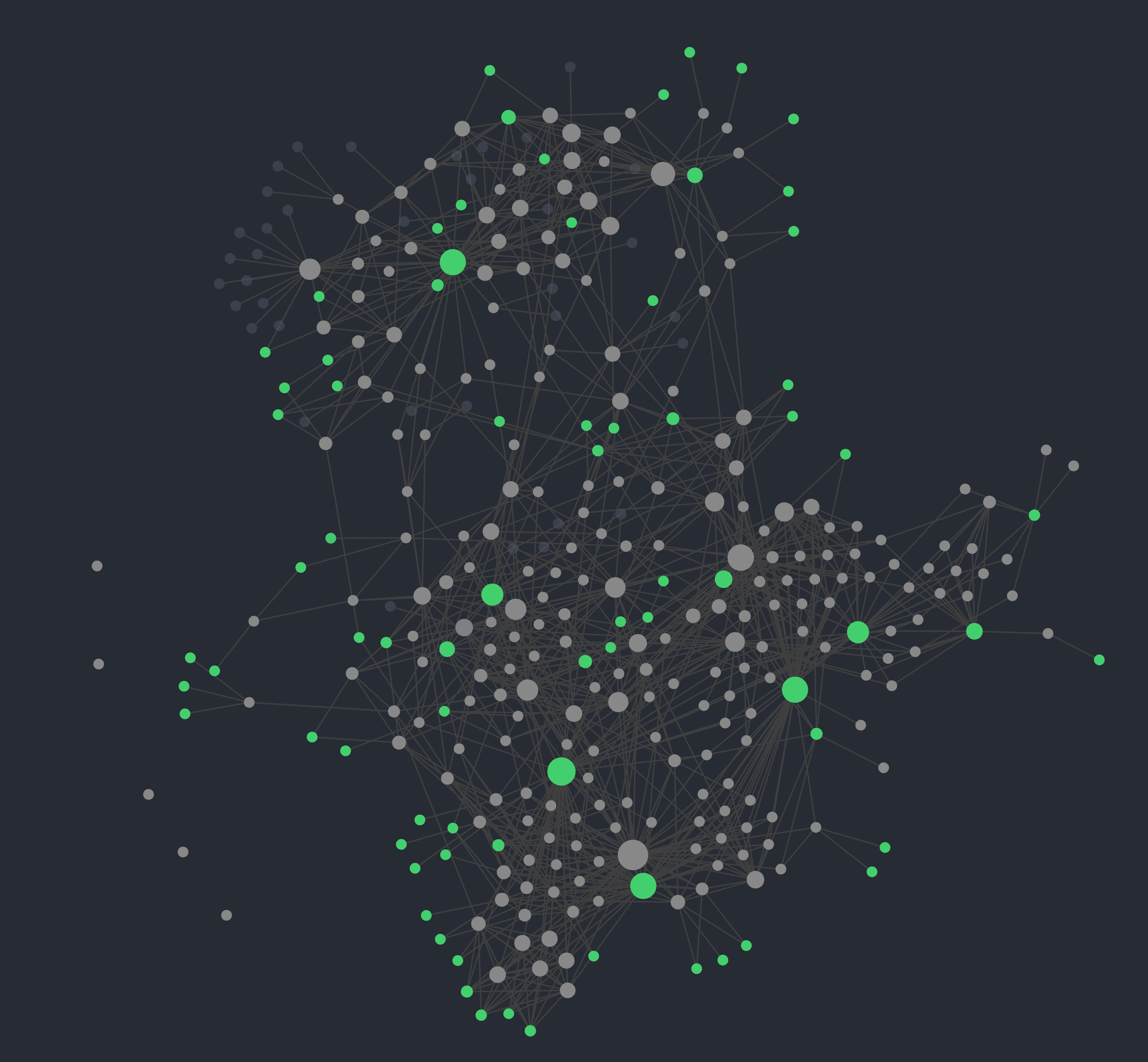

I introduced the benefits of using Obsidian in my essay last month. In short, it sits on top of my Markdown files and treats them as a graph. Native wikilinks connect notes to other notes. Backlinks show me everything that points to a given note from elsewhere in my vault. Those two features turn a folder of files into a navigable map.

The graph view of my Obsidian vault. Every link is a decision I made — a judgment about what connects to what.

The important thing about this map is that I drew it. Every link in my vault is a decision I made — a judgment about what connects to, reinforces, or extends something else. The work of linking is manual, which is the point. Those decisions aggregate into a structure that reflects how I think.

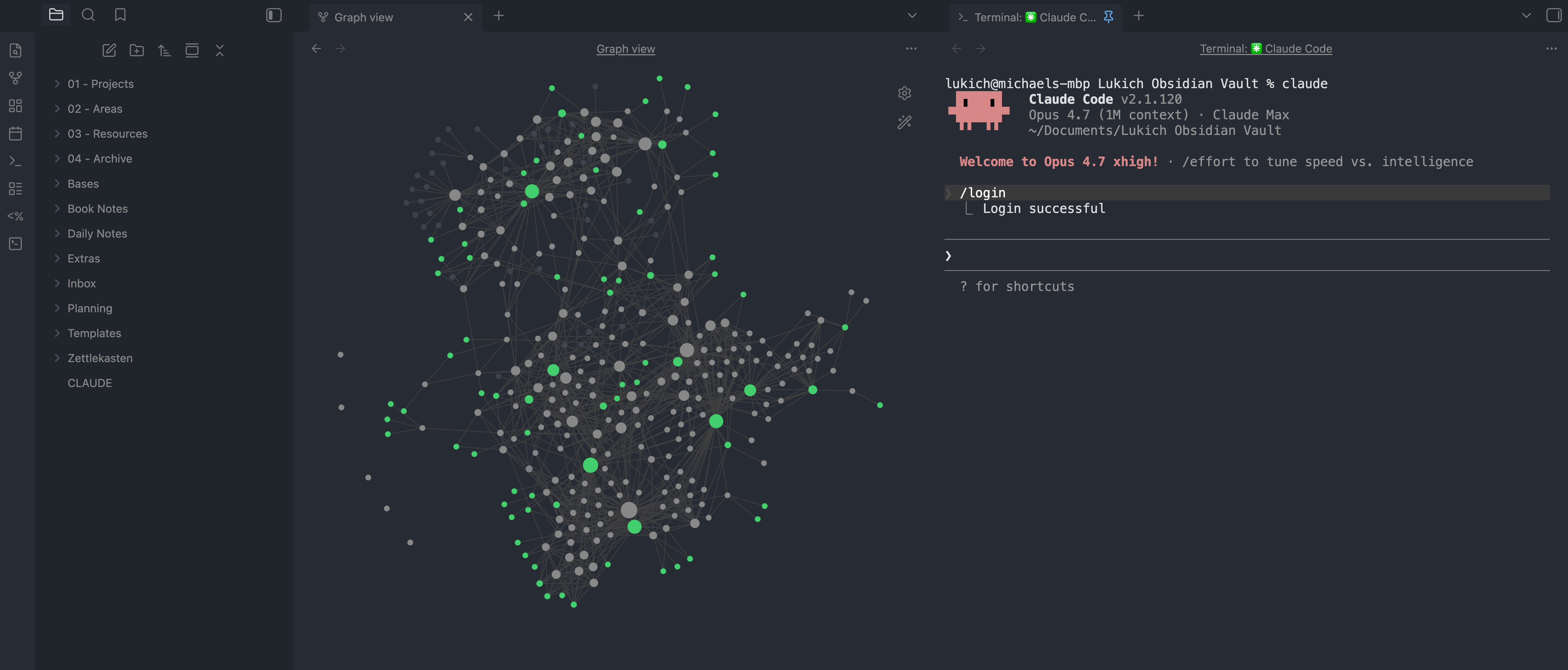
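The link-and-backlink idea is simple enough to sketch in code. The example below is a rough illustration, not how Obsidian itself is implemented: it assumes notes are already loaded into a dictionary of name to text, and the names `WIKILINK`, `outgoing_links`, and `backlinks` are hypothetical. It scans each note for double-bracket links and inverts them into a backlink index.

```python
import re
from collections import defaultdict

# Matches [[target]], [[target|alias]], and [[target#heading]].
# Only the target note name is captured.
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:#[^\]|]*)?(?:\|[^\]]*)?\]\]")


def outgoing_links(text: str) -> set[str]:
    """Return the set of note names that this note links to."""
    return {match.group(1).strip() for match in WIKILINK.finditer(text)}


def backlinks(notes: dict[str, str]) -> dict[str, set[str]]:
    """Invert outgoing links: for each target, which notes point at it?"""
    index = defaultdict(set)
    for name, text in notes.items():
        for target in outgoing_links(text):
            index[target].add(name)
    return dict(index)
```

Every entry in the resulting index traces back to a link someone typed by hand. The code only reorganizes those decisions so they can be looked up from the other direction.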

Layer 3: Claude Code

The two layers above are powerful on their own. But add a processor that can navigate the map, and something new emerges.

Remember the issues I had with Notion AI sorting through my various notes? To be fair, that was a year ago, which is a lifetime in AI’s short public evolution. Notion has advanced its own AI capabilities since then. But in all my testing, it still felt clunky. Shifting to this setup has felt like a meaningful upgrade.

I can direct Claude Code to read my Layer 1 notes and pull in the Layer 2 graph as context. From there, I can compare notes, find connections, and trace threads across my knowledge vault.

By developing a set of configuration files that provide enough context — such as my philosophy, conventions, and goals — I can customize the AI to work for me. The processor ingests those files at the start of every session and operates within the frame they describe. The outputs are downstream of what I put in.

The three layers in one workspace — markdown files on the left, the Obsidian UI in the middle, Claude Code on the right.

The sum of this architecture is plain text I own. A graph of connections I made. And a processor I direct.

I decide what matters, and the processor operates accordingly.

Where the Friction Drops

To close out this look into my monthlong experiment, here are a few examples of what changes when the three layers work together, each from a different part of my life.

Planning Across Time Horizons

In my segmented planning essay, I explained my hierarchy of time horizons, which I use to cascade from life visions to daily tasks:

Vision → Year → Quarter → Month → Week → Day

Each time horizon included a set of review and planning sessions. This helped me incorporate feedback and adjust the course, ensuring I never strayed too far from the path. Templates helped, but the setup time was high. Remembering everything that mattered from the prior quarter — let alone the prior year — was hard.

I’ve noticed that my monthly, weekly, and daily reviews have gone much more smoothly this past month. I uploaded my templates into context folders and defined specific processes (Claude Code refers to them as skills) that guide me. Claude can ingest those and sort through all relevant notes: vision documents, prior reviews, project archives, and my calendar.

My weekly plan pulls from the current month and the prior review. My daily plan pulls from the weekly view, the calendar, and priorities I’ve flagged. Each level inherits from the one above. Claude surfaces the relevant knowledge for me. This saves me hours I’d otherwise spend digging through old notes and pulling material I might have missed entirely. And if, by chance, it misses something — these tools are still fallible — I can add what’s missing or cut what doesn’t belong.

The cascade compounds because my retrieval cost dropped. I can spend more of my time making decisions and executing.
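The inheritance between horizons can be written down as a small lookup. This is only a sketch of the idea described above, under the assumption that each plan reads the plan one level up plus a few extra inputs. The names `HORIZONS`, `EXTRA_SOURCES`, and `sources_for` are hypothetical, and the extra inputs listed are just the ones mentioned in this essay for the weekly and daily plans.

```python
# Time horizons from broadest to narrowest, as listed in the essay.
HORIZONS = ["Vision", "Year", "Quarter", "Month", "Week", "Day"]

# Extra inputs beyond the parent plan. Only the weekly and daily
# extras are described in the essay; other horizons are left empty.
EXTRA_SOURCES = {
    "Week": ["prior review"],
    "Day": ["calendar", "flagged priorities"],
}


def sources_for(horizon: str) -> list[str]:
    """List what a plan at this horizon pulls from.

    Raises ValueError if the horizon is not one of HORIZONS.
    """
    position = HORIZONS.index(horizon)
    sources = []
    if position > 0:
        # Each level inherits from the level directly above it.
        sources.append(f"{HORIZONS[position - 1]} plan")
    sources.extend(EXTRA_SOURCES.get(horizon, []))
    return sources
```

Calling `sources_for("Day")` would list the weekly plan, the calendar, and flagged priorities, which mirrors the daily planning inputs described above.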

Connections Across Domains

Last month, I read Labyrinths by Jorge Luis Borges. That book led me to think about Benoit Mandelbrot and inspired me to polish my writing on the coastline paradox into an essay. While researching, I prompted Claude a few times to scan my notes for tangential connections.

It surfaced some connections that I doubt I would have made independently. There are too many to share here, so I’ll stick with one between two notes from two different worlds.

I’d forgotten that the first note was there or that I had captured it. Last summer, I read Ryszard Kapuściński’s Travels With Herodotus, one of my top books of 2025. In the book, he writes a lovely line about his time in India:

India is all about infinity — an infinity of gods and myths, beliefs and languages, races and cultures; in everything and everywhere one looks, there is this dizzying endlessness.

I captured this in my digital book notes with an annotation of my own: “Another systems thinking related reference. It’s all systems and subsystems. Related to [[fractals]].”3

The second note was from a systems thinking book by Derek and Laura Cabrera called Connect the Dots:

Every dot is a whole world in and of itself, containing networks of dots and connections inside it… If you zoom in on any dot, all elements are embedded in it.

The same fractal Kapuściński saw in India is formalized by the Cabreras’ research. The connection was always there in my notes. The system surfaced it.

Pre-Meeting Briefings

My first two examples are from personal projects. But for most of the week, I’m an analytics executive at a marketing agency. My days are busy. I’m a stakeholder in multiple projects across the agency, and most weeks include many team meetings, 1:1s, and executive readouts with people within and outside my organization. Each meeting requires context from a different domain — project histories, recent decisions, and threads from other conversations.

Before each meeting, I’d prepare by rereading project documents, skimming the past few weeks of notes, scanning recent messages in Microsoft Teams threads, and recalling the latest decisions.

This past month, I’ve developed a new routine: using Claude Code to generate a meeting briefing. The process pulls relevant project notes, meeting notes, recent decisions, and transcripts from prior meetings, and synthesizes them into an organized 1-pager with the information I need to be effective. What used to take thirty minutes of scattered prep now takes five. I enter meetings oriented, starting the conversation with value rather than wasting time on a recap.

A constant theme runs through these workflows. The underlying notes are mine. I provide the raw data, the links, and the structure. I also direct the AI to engage using standard playbooks, frameworks, and workflows. I’m making the computer useful for me — fitting it into my own workflows and using it as a partner, not a replacement.

I wrote in my book that thinking is one of the most uniquely human skills. We cannot afford to give that ability away. But with the right structure and a clear set of principles for how we engage with technology, we can use these tools to challenge, enhance, and continue developing our thinking.

Wrap-Up & Book Updates

I’ve been using this updated system for a month now, and I’m impressed. I turn to it more often and open Notion less. I’m not sure I’ll move everything over yet, but the benefits so far have been real, and I’ll keep testing.

This is what the grey zone of AI looks like in practice — useful, deliberate, and still mine.

A quick note for those who made it this far. The Stoic Systems Thinker is in the final stretch of layout editing. I expect this phase to wrap within the next week. From there, things will move quickly. Layout completion is one of the final steps before the book goes to print. I’m hoping that by my next essay, I’ll finally have publication dates to share. The finish line is in sight.

If you’re building something like the infrastructure I described in this essay, please reach out and share it. I love hearing how people are building these days — purposefully, on their own terms.

All the best,

Michael

1 A “second brain” is an idea popularized by Tiago Forte in his 2022 book, Building a Second Brain. The name is more playful than precise — different people use it to mean different things. At its core, it’s just a purposefully organized body of knowledge you can come back to when you need it.

2 These tools are less helpful if you have poor structure, making this phase a secondary optimization point behind organization. Put simply: If your files aren’t organized in a structure you understand, you’ll struggle with retrieval.

3 The brackets are intentional and appear this way in my digital notes. They are wikilinks, a style of linking documents that’s incredibly useful for building an emergent network of knowledge, such as Wikipedia or your own digital knowledge management system.